
In an ageing reiser4 development environment where I still build Metztli Reiser4 and, recently, Metztli Reiser5 kernels and hack the Debian Installer (d-i) minimal netboot images that I make available on the SourceForge site, I began to notice systemd errors in the dmesg logs.

...[ 3821.858183] systemd[1]: systemd-journald.service: Main process exited, code=killed, status=6/ABRT

[ 3821.858285] systemd[1]: systemd-journald.service: Failed with result 'watchdog'.

[ 3821.859532] systemd[1]: systemd-journald.service: Scheduled restart job, restart counter is at 1.

[ 3821.860945] systemd[1]: Stopping Flush Journal to Persistent Storage...

[ 3821.870796] systemd[1]: systemd-journal-flush.service: Succeeded.

[ 3821.871516] systemd[1]: Stopped Flush Journal to Persistent Storage.

[ 3821.872296] systemd[1]: Stopped Journal Service.

[ 3821.875407] systemd[1]: Starting Journal Service...

[ 3912.076346] systemd[1]: systemd-journald.service: start operation timed out. Terminating.

[ 4002.329312] systemd[1]: systemd-journald.service: State 'stop-sigterm' timed out. Killing.

[ 4002.329476] systemd[1]: systemd-journald.service: Killing process 11100 (systemd-journal) with signal SIGKILL.

[ 4092.582238] systemd[1]: systemd-journald.service: Processes still around after SIGKILL. Ignoring.

[ 4182.835076] systemd[1]: systemd-journald.service: State 'final-sigterm' timed out. Killing.

[ 4182.835156] systemd[1]: systemd-journald.service: Killing process 11100 (systemd-journal) with signal SIGKILL.

[ 4224.746769] systemd[1]: systemd-journald.service: Main process exited, code=killed, status=9/KILL

[ 4224.746796] systemd[1]: systemd-journald.service: Failed with result 'timeout'.

[ 4224.748891] systemd[1]: Failed to start Journal Service.

[ 4224.749509] systemd[1]: Dependency failed for Flush Journal to Persistent Storage.

[ 4224.749605] systemd[1]: systemd-journal-flush.service: Job systemd-journal-flush.service/start failed with result 'dependency'.

[ 4224.753055] systemd[1]: systemd-journald.service: Scheduled restart job, restart counter is at 2.

[ 4224.754325] systemd[1]: Stopped Journal Service.

[ 4224.756880] systemd[1]: Starting Journal Service...

[ 4239.468189] systemd[1]: Started Journal Service....

I was already using Debian Buster backports and there was no Debian systemd package, nor source, newer than what I was using. Moreover, the systemd issue was introducing erratic behaviour in the GUI userland, Enlightenment Window Manager 0.24.2 in my case. So I had to fix the issue and headed to the systemd development repository on GitHub, where there were newer commits ahead of the latest official source found in the Debian repositories. And I decided to give the systemd build a try, locally.

After test-acl-util stopped my build attempts I filed an issue: systemd build fails on reiser4 4.0.2 #17013. I was not sure my issue would get noticed, for this was my first incursion into building systemd and likely not many individuals build on reiser4. Luckily, on the next day I received a comment from a dedicated person, whose last comment included the phrase I used as the topic of this post. He was kind enough to modify and commit a few lines of code so that file systems which do not currently support ACLs, like reiser4, would be able to skip test-acl-util during the build procedure. I subsequently closed the issue.

That made my systemd build go further; yet, my build was experiencing TIMEOUTS which failed the build procedure:

test-journal TIMEOUT 30.32 s

...

463/562 test-journal-stream TIMEOUT 30.17 s

...

468/562 test-journal-interleaving TIMEOUT 31.02 s

I tried the systemd build in another relatively clean partition on this same ageing hardware, with the same failure. After multiple failures in both local reiser4 partitions I reopened the issue, erroneously believing that, if the test code and headers were entirely in the systemd git repository, probably -- just probably -- the developers never envisioned any reiser4 peculiarities. I arrived at that irrational conclusion by analyzing some of the source files involved, where I noted some comments accommodating a btrfs quirk.

On the next day I saw my reopened issue closed again by a different systemd contributor. Well, I thought that was it, and I began to explore the possibility of installing OpenRC after reading an older article in Linux Mafia (link to non-SSL site). Researching further, I was pleasantly surprised that some well-known Debian developers maintain OpenRC, and I decided to build it as a potential replacement for my failing systemd. OpenRC is hosted at GitHub, too, and the source readily built under the Debian packaging framework in my reiser4 environment. OpenRC was not a clean systemd replacement: I had to spend some time visiting Devuan (Debian without systemd) and ripping out the dbus innards from Beowulf to use in my quest, and then strip the systemd references from the Pulseaudio source and Debian packaging to fix an Enlightenment volume control application issue. But the replacement worked:

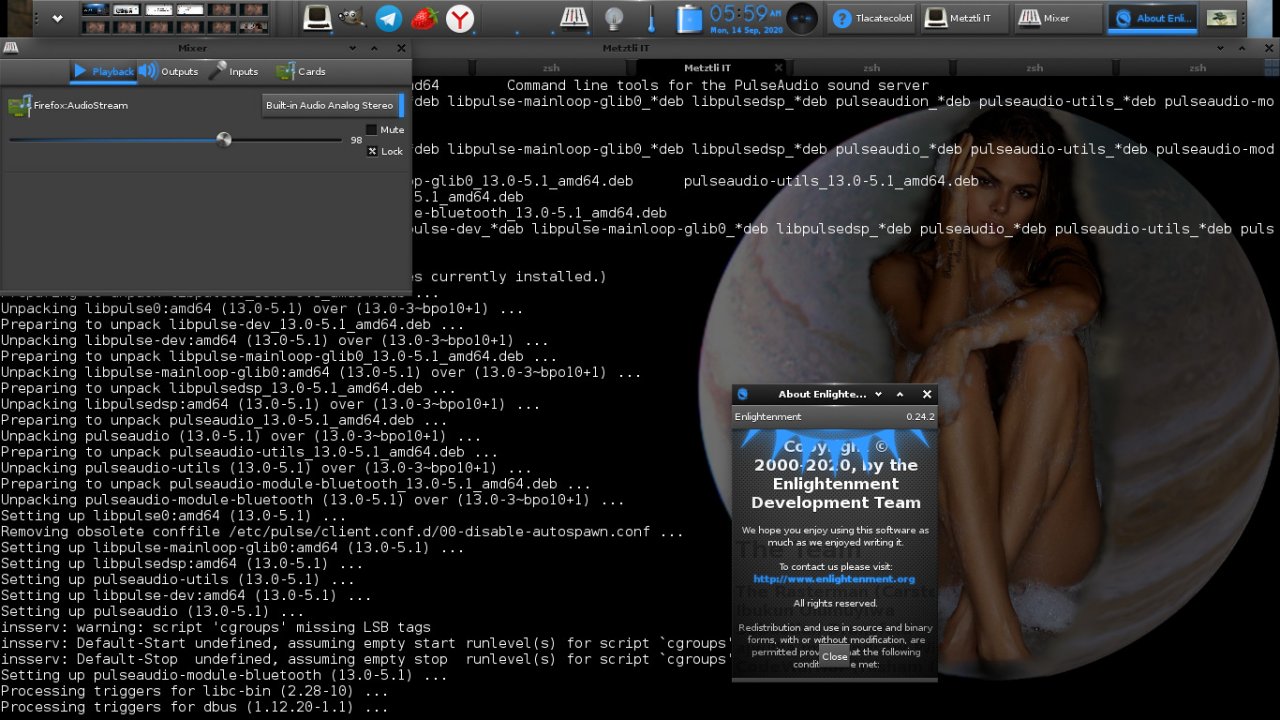

Installing non-systemd Pulseaudio

Notwithstanding, a day later I got another message from the first person, who had committed the skip fix for file systems without ACL support to the systemd master repository on GitHub; the person suggested, 'maybe reiser4 is just terribly slow for this access pattern?', i.e., the topic of this post. And I decided to dispel any doubts about the systemd build on reiser4 once and for all.

I have a spare ~200 GB custom Metztli Reiser4 Zstd transparent compression disk snapshot saved in the cloud, more specifically Google Cloud Platform (GCP). First, I generated a Google Compute Engine (GCE) disk from my saved disk snapshot, moiocoiatzin-epyc-zstd-cloud-kernel, in the us-central1 region, where I could create an AMD Epyc-based cloud instance.

Shell

gcloud compute disks create moiocoiatzin --description "AMD64 Rome Epyc" --labels=amd64=epyc --source-snapshot=moiocoiatzin-epyc-zstd-cloud-kernel --zone us-central1-a

Subsequently, I created an instance with moiocoiatzin -- an allusion to 'Entity who self-creates, self-thinks, self-invents, or wills, Himself into Being', i.e., the disk we emerged 'a priori':

Shell

gcloud beta compute instances create iztaccihuatl --disk name=moiocoiatzin,mode=rw,boot=yes --metadata enable-guest-attributes=TRUE --metadata-from-file startup-script=./local-directory/reghostkey.sh --tags chingon --machine-type=n2d-standard-8 --zone us-central1-a

And I named the cloud instance Iztaccihuatl -- an allusion to the real Mexicah's notion of a 'White woman', in reference to a mountain range / volcano in the valley of their eponymous city-state which, when covered with snow, projects the shape of a sleeping woman underneath. The --disk options attach the disk in read/write mode and mark it as the boot disk. Our Debian-based Metztli Reiser4 cloud images are created and customized for the Google Compute Engine; thus, for the security of whoever uses our images, I remove the /etc/ssh/ssh_host_* files, as these are regenerated upon booting the instance by a script, say reghostkey.sh, whose contents might be:

#! /bin/bash

# Regenerates deleted host keys upon GCE image first boot.

# GCE runs startup scripts as root, so no 'sudo su -' is needed here.

apt-get -t buster-backports update

# Reconfiguring openssh-server recreates the missing /etc/ssh/ssh_host_* keys.

dpkg-reconfigure --frontend=noninteractive openssh-server

##/etc/init.d/ssh restart

systemctl try-reload-or-restart ssh

Obviously, if our cloud image were running openrc -- and not systemd -- we would uncomment the second to last directive and comment out the last one.

I am also specifying the machine type as an AMD Epyc (N2D) with eight (8) cores and 32 GB of RAM, i.e., standard.

After upgrading the older software which came from the snapshot -- including the reiser4 kernel labeled as 'reizer4' to distinguish it from tlilxochitl, i.e., vanilla, Intel reiser4 kernels -- I uploaded my local systemd master source code repository, which my ageing eight (8) core / 16 GB RAM machine was unable to build.

As can be seen in the media, the Metztli Reiser4 environment in the cloud completed the systemd build in approximately seven(7) minutes. And I can certainly assert that the systemd build issues that I experienced were not because 'reiser4 is just terribly slow for this access pattern' but, rather, those issues were due to my ageing local hardware platform.

Image reference: Вики Одинцова : Viki Odintcova, aka, Вики Xochiquetzal

NOTE: Although the commands and/or code discussed above generally work well, please consider that these are for illustrative purposes and are provided AS-IS, without any implicit/explicit assurance as to their correctness.

Reiser5 (Format Release 5.X.Y)

Local volumes with parallel scaling out.

O(1) space allocator.

User-defined and built-in data distribution and transparent migration capabilities

I am happy to announce a brand new method of aggregation of block devices into logical volumes on a local machine. I believe it is a qualitatively new level in file systems (and operating systems) development: local volumes with parallel scaling out (PSO).

Reiser5 doesn't implement its own block layer like ZFS etc. In our approach scaling out is performed by file system means, rather than by block layer means. The flow of IO-requests issued against each device is controlled by the user. To add a device to a logical volume with parallel scaling out, you first need to format that device -- at first glance, this is what distinguishes parallel from non-parallel scaling out. The principal difference between the two will be discussed below.

Systems with parallel scaling out provide better scalability and resolve a number of problems inherent to non-parallel ones. In logical volumes with parallel scaling out, devices of smaller size and (or) throughput don't become "bottlenecks", as happens e.g. in RAID-0 and its popular modifications.

I. Fundamental shortcomings of logical volumes composed by block-layer means

- Local file systems don't participate in scaling out. They just face a huge virtual block device, for which they need to maintain a free space map. Such maps grow as the volume fills with data. This results in increasing latency of free block allocation and, consequently, in a substantial performance drop on large volumes which are almost full.

- Loss of disk resources (space and throughput) on logical volumes composed of devices with different physical and geometric parameters (because of poor translations provided by "classic" RAID levels). Low-performance devices become a bottleneck in RAID arrays. Attempts to replace RAID levels with better algorithms lead to inevitable and unfixable degradation of logical volumes. Indeed, defragmentation tools work only on the space of virtual disk addresses. If you use classic RAID levels, then everything is fine here: reducing fragmentation on the virtual device always results in reducing fragmentation on the physical ones. However, if you use more sophisticated translations to save disk space and bandwidth, then fragmentation on the real devices tends to accumulate, and you are not able to reduce it just by defragmenting the virtual device. Note that what matters is always the real devices - no one actually cares what happens on virtual ones.

- With only block layer means it is impossible to build heterogeneous storage composed of devices of different nature. You are not able to use different approaches to devices-components of the same logical volume (e.g. defragment only rotational drives, issue discard requests only for solid state drives, etc).

- It is impossible to efficiently implement data migration on logical volumes composed by block-layer means.

II. The previous art

Previously, there was only one method for scaling out local volumes: by block layer means. That is, the file system deals only with virtual disk addresses (allocation, defragmentation, etc), and the block layer translates virtual disk addresses to real ones and back. The most common complaint is about the performance drop on such logical volumes when they are large and more than 70% full.

Mostly it is related to disk space allocators, which are, to put it mildly, not excellent, and introduce big latency when searching for free blocks on extra-large volumes. Moreover, nothing better has been invented in the past 30 years. It may also easily be that the best algorithms for free space management simply do not exist.

Some file systems (ZFS and the like) implement their own block layers. This helps to implement failover; however, the mentioned problem doesn't disappear: if the block layer does its job very well, then the file system, again, faces a huge logical block device, which is hard to handle.

Significant progress in scaling out was made by parallel network file systems (GPFS, Lustre, etc). However, it was unclear how to apply their technologies to a local FS. Mostly, this is because local file systems don't have such a luxury as the "backend storage" that network ones do. What a local FS does have is only an extremely poor interface for interacting with the block layer. For example, in Linux a local FS can only compose and issue an IO request against some buffer (page). In other words, it was unclear what a "local parallel file system" is.

III. Our approach

O(1) space allocator

~9 years ago I realized that the first approach (implementing its own block layer inside a local FS) is totally wrong. Instead, we need to pay attention to parallel network file systems and adopt their methods. However, as I mentioned, there is nothing even close to a direct analogy, which means that for a local FS we need to design "parallelism" from scratch. The same goes for distribution algorithms: I am totally unhappy with the existing ones. Of course, you can deploy a networking FS on the same local machine for a number of block devices, but that would not be a serious solution. I state that a serious analogy can be defined and implemented in a properly designed local FS - meet Reiser5.

The basic idea is pretty simple: do not mess with large free space maps (whose sizes depend on the volume size). Instead, we need to manage many small ones of limited size. At any moment the file system should be able to pick a proper small space map and work only with it. Needless to say, for any logical volume, however big, the search time in such a map will also be bounded by some value which doesn't depend on the logical volume size. For this reason, we'll call it an O(1) space allocator.

The simplest way is to maintain one space map per block device which is a component of the logical volume. If some device is too large, simply split it into a number of partitions to make sure that no space map exceeds some upper limit. Thus, users should also put in some effort on their side to make the space allocator O(1).

Parallel scaling out as disk resources conservation

Definitions and examples.

Here we'll consider an abstract subsystem S of the operating system managing a logical volume composed of one or more removable components.

Definition 1. We'll say that S saves disk space and throughput of the logical volume, if

- a) its data capacity is the sum of the data capacities of its components;

- b) its disk bandwidth is the sum of the disk bandwidths of its components.

We'll say that an LV managed by such a system is a volume with parallel scaling out (PSO).

There is a good analogy for understanding PSO: imagine that it rains and you put out several cylindrical buckets with openings of different sizes to collect water. In this example raindrops represent IO-requests, and the set of buckets represents a logical volume. Note that the amount of water that falls into each bucket is proportional to the area of its opening (considered as throughput). In this example all buckets are filled with water evenly and fairly: if one bucket is full, then the others are also full. Note that a non-cylindrical bucket shape will likely break the fairness of water distribution between them, so that PSO won't take place in this case.

In practice, however, IO-systems are more complicated: IO requests are distributed, queued, etc. And conservation of disk resources usually doesn't take place: the disk bandwidth of any logical volume turns out to be smaller than the sum of the bandwidths of its components. Nevertheless, if the loss of resources is small and doesn't increase with the growth of the volume, then we'll say that such a system features parallel scaling out.

In complex IO-systems the "leak" of disk bandwidth has a complex nature and can happen in any subsystem: in the file system, in the block layer, etc. The loss can also be caused by interface properties, etc. The fundamental reason for almost all resource leaks is that the mentioned subsystems were poorly designed (because better algorithms were not known at the time, or for other reasons).

The classic example of disk space and throughput loss is RAID arrays. Linear RAID saves disk space, but always drops disk bandwidth. RAID-0, composed of devices of different size and bandwidth, drops both the disk space and the disk bandwidth of the resulting logical volume. The same holds for their modifications like RAID-5. In all the mentioned examples the loss of disk bandwidth is caused by poor algorithms (specifically, by the fact that IO requests are directed to the components in wrong proportions).

Definition 2. A file system managing a logical volume is said to be with parallel scaling out if it saves the disk space and bandwidth of that logical volume. In other words, if it doesn't drop the mentioned disk resources.

Note that the file system is only a part of an IO-subsystem, and it can easily happen that the file system saves disk resources while the whole system does not. For example, because of a poorly designed block layer which puts IO requests issued for different block devices into the same queue on a local machine, etc.

As an example, let's calculate the disk bandwidth of a logical volume composed of 2 devices, the first of which has a disk bandwidth of 200 M/sec and the second 300 M/sec. We'll consider 3 systems: in the first one the mentioned devices compose a linear RAID, in the second one a striped RAID (RAID-0), and in the third one they are managed by a file system with parallel scaling out.

Linear RAID distributes IO requests not evenly: first we write to the first device. Once it is full, we write to the second one. Disk bandwidth of linear RAID is defined by the throughput of the device we are currently writing to. Thus it always is not more than throughput of the faster device, i.e. 300 M/sec.

Striped RAID (RAID-0) distributes IO requests evenly (but not fairly). In the same interval of time the same number N/2 of IO-requests will be issued against each device. On the first device it will be written in N/400 sec. On the second device it will be written in N/600 sec. Note that the first device is slower, therefore we should wait N/400 sec for all N IO-requests to be written to the array. So throughput of RAID-0 in our case is N/(N/400) = 400 M/sec.

FS with parallel scaling out distributes IO requests evenly and fairly. In the same interval of time the number of blocks issued against each device is N*C, where C is relative throughput of the device. Relative throughput of the first device is 200/(200+300) = 0.4. Of the second one - 300/(200+300) = 0.6

Portion of IO-requests issued for each device will be written in parallel in the same time 0.4N/200 = 0.6N/300 sec. Therefore, throughput of our logical volume in this case is N/(0.4N/200) = 500 M/sec.

The resulting table of throughput:

Linear RAID: <= 300 M/sec

RAID-0: 400 M/sec

Parallel scaling out FS: 500 M/sec

According to the definitions above, any local file system built on top of RAID/LVM does NOT possess parallel scaling out (first, because RAID and LVM don't save disk resources; second, because the latency introduced by the free space allocator grows with the volume). For the same reasons, any local FS which implements its own block layer (ZFS, Btrfs, etc) does NOT possess parallel scaling out. Note that any network FS built on top of two or more local FS managing simple partitions as backend saves disk resources.
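The three throughput figures for the two-device example (200 and 300 M/sec) can be reproduced with a short calculation. The following is only a sketch of the arithmetic in the text, not of any Reiser5 code; the function names are made up for illustration, and exact fractions are used to avoid rounding noise.

```python
from fractions import Fraction


def linear_raid_bound(bandwidths):
    """Linear RAID writes to one device at a time, so throughput never
    exceeds that of the fastest component."""
    return max(bandwidths)


def raid0_throughput(bandwidths):
    """RAID-0 sends an equal share N/M to each of M devices; the write is
    complete only when the slowest device has finished its share."""
    n = Fraction(1)  # total amount of data, arbitrary units
    share = n / len(bandwidths)
    slowest_time = max(share / b for b in bandwidths)
    return n / slowest_time


def pso_throughput(bandwidths):
    """Parallel scaling out sends each device a share proportional to its
    relative throughput, so all devices finish at the same time."""
    n = Fraction(1)
    total = sum(bandwidths)
    times = [(n * Fraction(b, total)) / b for b in bandwidths]
    return n / max(times)


if __name__ == "__main__":
    devices = [200, 300]  # M/sec, the two devices from the example
    print(linear_raid_bound(devices), raid0_throughput(devices), pso_throughput(devices))
```

With more than two devices the same pattern holds in this model: RAID-0 yields M times the slowest bandwidth, while the proportional split yields the sum of all bandwidths.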

Overhead of parallelism for local FS

As we mentioned above, the characteristic feature of any FS with PSO is that before adding a device to a logical volume you should format it. Of course, this adds some overhead to the system. However, that overhead is not dramatically large. Specifically, with the reiser4 disk format40 specifications the disk overhead includes 80K at the beginning of each device-component. Next, for each device Reiser5 reads the on-disk super-block and loads it into memory. Thus, the memory overhead includes one persistent in-memory super-block (~500 bytes) per device-component of a logical volume. That is, a logical volume composed of one million devices will take ~500M of (pinned) memory. I think that a person maintaining such a volume will be able to find $30 for an additional memory card. That overhead is the single disadvantage of FS with PSO. At least, we don't know of other ones.

Asymmetric logical volumes.

Data and meta-data bricks

So, any logical volume with parallel scaling out is composed of block devices formatted by the mkfs utility. Such a device has a special name: a "brick", or "storage subvolume", of a logical volume.

To begin with, we have implemented the simplest approach, in which meta-data is located on dedicated block devices - we'll call them "meta-data bricks". Recall that in reiser4 the notion of "meta-data" includes all kinds of items (key'ed records in the storage tree), and the notion of data means unformatted blocks pointed to by "extent pointers". Such unformatted nodes are used to store the bodies of regular files.

Meta-data bricks are allowed to contain unformatted data blocks. Data bricks contain only unformatted data blocks. For obvious reasons such logical volumes are called "asymmetric".

Stripes. Fibers. Distribution, allocation and migration

Basic definitions

A stripe is a logical unit of distribution, that is, a minimal object whose parts cannot be stored on different bricks.

A set of distribution units dispatched to the same brick is called fiber.

Comment. In the previous art fibers were called stripes (case of RAID-0), and logical units of distribution didn't have a special name. For a number of adjacent sectors forming such a unit a notion of "stripe width" was used.

Data stripe is a logical block of some size at some offset in a file.

Meta-data striping also can be defined, but we don't consider it here for simplicity.

File system block is, as usual, an allocation unit on some brick.

A stripe is said to be allocated if all its parts have got disk addresses on some brick.

From these definitions it directly follows that a file system block cannot contain more than one stripe. On the other hand, an allocated stripe can occupy many blocks.

For any file system block, its full address in a logical volume is defined as a pair (brick-ID, disk-address).

A stripe is said to be dispatched if it has got the first component (brick-ID) of its full address in the logical volume.

A stripe is said to be migrated if its old disk addresses have been released and new ones (possibly on another brick) have been allocated.

The core difference between parallel and non-parallel scaling out in terms of distribution and allocation: in PSO-systems any stripe first gets distributed, then allocated. In systems with non-parallel scaling out it is the other way around: any stripe first gets allocated, then distributed. An example is any local FS built on top of a RAID-0 array. Indeed, such an FS first allocates a virtual disk address for a logical block, then the block layer assigns a real device-ID and translates that virtual address to a real one.

Data distribution and migration.

Fiber-Striping. Burst Buffers.

Distribution defines which device-component of a logical volume an IO request composed for a dirty buffer (page) will be issued against.

In file systems with non-parallel scaling out, the "destination" device is always defined by the virtual disk address allocated for that request. E.g. for RAID-0 the ID of the destination device is defined as (N % M), where N is the virtual address (block number) allocated by the file system, and M is the number of disks in the array.

In our approach (O(1) space allocator) we allocate disk addresses on each physical device independently, so for every IO-request we first need to assign a destination device, then ask the block allocator managing that device to allocate a block number for this request. So, in our approach distribution doesn't depend on allocation.
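The two orderings can be contrasted in a small sketch. Everything below is hypothetical and for illustration only: `BrickAllocator` is a toy counter standing in for a real per-brick block allocator, and `pick_brick` is a plain capacity-weighted hash used as a stand-in for the distribution step. It is NOT the fiber-striping algorithm, whose details are not given in this text.

```python
import hashlib


def raid0_device(virtual_block, num_disks):
    """Allocate-then-distribute: the device follows from the already
    allocated virtual block number N as N % M."""
    return virtual_block % num_disks


class BrickAllocator:
    """Toy per-brick allocator (hypothetical): hands out 0, 1, 2, ..."""

    def __init__(self):
        self.next_free = 0

    def allocate(self):
        block = self.next_free
        self.next_free += 1
        return block


def pick_brick(stripe_key, capacities):
    """Stand-in distribution step: hash the stripe key onto bricks in
    proportion to their capacities. Not the fiber-striping algorithm."""
    total = sum(capacities)
    digest = hashlib.sha256(stripe_key.encode()).digest()
    point = int.from_bytes(digest[:8], "big") % total
    for brick_id, capacity in enumerate(capacities):
        if point < capacity:
            return brick_id
        point -= capacity


def dispatch_then_allocate(stripe_key, capacities, allocators):
    """Distribute-then-allocate: choose the brick first, then ask only
    that brick's allocator for a disk address."""
    brick_id = pick_brick(stripe_key, capacities)
    disk_address = allocators[brick_id].allocate()
    return (brick_id, disk_address)


if __name__ == "__main__":
    allocators = [BrickAllocator(), BrickAllocator()]
    for i in range(5):
        print(dispatch_then_allocate(f"file:{i}", [2, 3], allocators))
```

The point of the second ordering is that each allocator only ever searches its own brick's space map, which is what keeps the search time independent of the total volume size.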

By default Reiser5 offers distribution based on algorithms (so-called fiber-striping) invented by Eduard Shishkin (patented stuff). With our algorithms all your data will be distributed evenly and fairly among all devices-components of the logical volume. It means that portion of IO requests issued against each device is equal to relative capacity of that device assigned by user. Operation of adding/removing a device to/from a logical volume automatically invokes data migration, so that resulted distribution is also fair. Portion of migrated data is always equal to relative capacity of the added/removed device. The speed of data migration is mostly determined by throughput of the device to be added/removed.

Alternatively, Reiser5 allows users to control data distribution and migration themselves. The most important application of user-defined distribution and migration is found in the HPC area, as so-called Burst Buffers (dumping "hot data" onto a high-performance proxy device, followed by its migration to "persistent storage" in the background).

In all cases the file system memorizes stripes location.

Atomicity of volume operations

Almost all volume operations (adding/removing a brick, changing bricks capacity, etc) involve re-balancing (i.e. massive migration of data blocks), so it is technically difficult to implement full atomicity of such operations. Instead, we issue 2 checkpoints (first before re-balancing, second - after), and handle 2 cases depending on where in relation to those points the volume operation was interrupted. In the first case user should repeat the operation again, in the second case user should complete the operation (in the background mode) using volume.reiser4 utility. See administration guide on reiser4 logical volumes for details.

Limitations on asymmetric logical volumes

Maximal number of bricks in a logical volume:

. in the "builtin" distribution mode - 2^32

. in the "custom" distribution mode - 2^64

In the "builtin" distribution mode, any 2 bricks of the same logical volume cannot differ in size by more than a factor of 2^19 (524,288, i.e. roughly half a million). For example, your logical volume cannot contain both 1M and 2T bricks.

Maximal number of stripe pointers held by one 4K-metadata block: 75 (for node40 format).

Maximal number of data blocks served by 1 meta-data block: 75*S, where S is stripe width in file system blocks. For example, for 128K-stripes and 4K blocks (S=32) one meta-data block can serve not more than 2400 data blocks. In particular, when all bricks are of equal capacity, it means that one meta-data brick can serve not more than 2400 data bricks.

For the best quality of "builtin" distribution it is recommended that:

- a) stripe size is not larger than 1/10000 of total volume size.

- b) number of bricks in your logical volume is a power of 2 (i.e. 2, 4, 8, 16, etc). If you cannot afford it, then make sure that number of hash space segments (a property of your logical volume, which can be increased online) is not smaller than 100 * number-of-bricks.
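The limits and recommendations above reduce to simple arithmetic, which can be sketched as follows. The function names are hypothetical and no such checker is claimed to exist in reiser4progs; the constants (75 pointers per block, the 1/10000 ratio, the factor of 100) are taken from the text.

```python
POINTERS_PER_META_BLOCK = 75  # per 4K meta-data block, node40 format (from the text)


def data_blocks_per_meta_block(stripe_size, block_size):
    """Maximal number of data blocks served by one meta-data block: 75*S,
    where S is the stripe width in file system blocks."""
    stripe_width = stripe_size // block_size
    return POINTERS_PER_META_BLOCK * stripe_width


def is_power_of_two(n):
    return n > 0 and n & (n - 1) == 0


def check_builtin_recommendations(stripe_size, volume_size, num_bricks, hash_segments):
    """Return a list of warnings for recommendations (a) and (b)."""
    warnings = []
    if stripe_size * 10000 > volume_size:
        warnings.append("stripe size is larger than 1/10000 of the volume size")
    if not is_power_of_two(num_bricks) and hash_segments < 100 * num_bricks:
        warnings.append("fewer than 100 hash space segments per brick")
    return warnings


if __name__ == "__main__":
    print(data_blocks_per_meta_block(128 * 1024, 4096))
    print(check_builtin_recommendations(128 * 1024, 2**30, 3, 100))
```

Sizes are in bytes here; any unit works as long as the stripe size and the volume size use the same one.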

Only one volume operation can be executed on a given logical volume at a time. If some volume operation has not completed, attempts to execute other ones will return an error (EBUSY).

Security issues

"Builtin" distribution combines random and deterministic methods. It is "salted" with volume-ID, which is known only to root. Once it is compromised (revealed), the logical volume can be subjected to "free space attack" - with known volume-ID an attacker (non-privileged user) will be able to fill some data brick up to 100%, while others have a lot of free space. Thus, nobody will be able to write anymore to that volume. So, keep your volume-ID a secret!

Software and Disk Version 5.1.3.

Compatibility

To implement parallel scaling out we upgraded the Reiser4 code base with the following new plugins:

- 1) "asymmetric" volume plugin (new interface);

- 2) "fsx32" distribution plugin (new interface);

- 3) "striped" file plugin (existing interface);

- 4) "extent41" item plugin (existing interface);

- 5) "format41" disk format plugin (existing interface).

In the best traditions we increment version numbers. The old disk and software version was 4.0.2. The "minor" number (2) is incremented because of (1-4). The "major" number (0) is incremented because of (5) and changes in the format super-block. The "principal" number (4) is incremented because of changes in the master super-block. For more details about compatibility see the Reiser4 development model.

Old reiser4 partitions (of format 4.0.X) will be supported by the Reiser5 kernel module. For this you need to enable the option to support the "Plan-A key allocation scheme" (not enabled by default) when configuring the kernel. Note that this will automatically disable support for logical volumes. The two are mutually exclusive for performance reasons.

Reiser4progs of software release number 5.X.Y don't support old reiser4 partitions of format 4.0.X. To fsck the latter, use reiser4progs of software release number 4.X.Y, which will exist as a separate branch.

TODO

- Interface for user-defined data distribution and migration (Burst Buffers);

- Upgrading FSCK to work with logical volumes;

- Asymmetric LV w/ more than 1 meta-data brick per-volume;

- Symmetric logical volumes (meta-data on all bricks);

- 3D-snapshots of LV (snapshots with an ability to roll back not only file operations, but also volume operations);

- Global (networking) logical volumes.

============================= APPENDIX =============================

The most recent version of this document will be available here: Logical Volumes Administration

Reiser5 logical volumes with builtin fair distribution and transparent data migration capabilities.

Administration guide - getting started.

A logical volume (LV) can be composed of any number of block devices, different in physical and geometric parameters. However, the optimal configuration (true parallelism) imposes some restrictions and dependencies on the size of such devices.

WARNING: The stuff is not stable. Don't put important data on logical volumes managed by software of release number 5.X.Y.

IMPORTANT: Currently there are no tools to manage Reiser5 logical volumes off-line, so it is strongly recommended to save/update the configuration of your LV in a file which doesn't belong to that volume.

1. Basic definitions.

Volume configuration. Brick's capacity.

Partitioning. Fair distribution. Balancing

The basic configuration of a logical volume is the following information:

- 1) Volume UUID;

- 2) Number of bricks in the volume;

- 3) List of brick names or UUIDs in the volume;

- 4) UUID or name of the brick to be added/removed (if any). That brick is not counted in (2) and (3).

For each volume its configuration should be stored somewhere (but not on that volume!) and properly updated before and after each volume operation performed on that volume. We make the user responsible for this. Volume configuration is needed to facilitate deploying a volume.

Abstract capacity (or simply capacity) of a brick is a positive integer. Capacity is a brick's property defined by the user. Don't confuse it with the size of the block device. Think of it as the brick's "weight" in some units. It is the user who decides which property of the brick to assign as its abstract capacity, and in which units. In particular, it can be the size of the block device in kilobytes, or its size in megabytes, or its throughput in M/sec, or another geometric or physical parameter of the device associated with the brick. It is important that the capacities of all bricks of the same logical volume are measured in the same units. Likewise, it would be utterly pointless to assign different properties as abstract capacities for bricks of the same LV, for example, the size of the block device for one brick and the disk bandwidth for another.

Capacity of each brick gets initialized by mkfs utility. By default it is calculated as number of free blocks on the device at the very end of the formatting procedure. For meta-data brick it is calculated as 70% of such amount. Capacity of any brick can be changed on-line by user.

Capacity of a logical volume is defined as a sum of capacities of its bricks-components.

Relative capacity of a brick is the ratio of brick's capacity to volume's capacity. Relative capacity defines a portion of IO-requests that will be issued against that brick.

Array of relative capacities (C1, C2, ...) of all bricks is called volume partitioning. Obviously, C1 + C2 + ... = 1.

(Real) data space usage on a brick is number of data blocks, stored on that brick.

Ideal (or expected) data space usage on a brick is T*C, where T is total number of data blocks stored in the volume. C is relative capacity of the brick.
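The definitions above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not part of Reiser5 or reiser4progs; the function names and the sample capacities are made up for the example.

```python
def partitioning(capacities):
    """Return relative capacities (C1, C2, ...) for a list of abstract capacities."""
    total = sum(capacities)
    if total <= 0 or any(c <= 0 for c in capacities):
        raise ValueError("capacities must be positive integers")
    return [c / total for c in capacities]


def ideal_usage(capacities, total_data_blocks):
    """Ideal data space usage T*C for each brick (T = total data blocks in the volume)."""
    return [total_data_blocks * c for c in partitioning(capacities)]


# Hypothetical capacities, all measured in the same units.
caps = [200, 100, 100]
print(partitioning(caps))       # relative capacities; they sum to 1
print(ideal_usage(caps, 1000))  # expected number of data blocks per brick
```

With these sample values the first brick carries half of the volume's capacity, so it is expected to hold half of the data blocks and to receive half of the IO-requests.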

It is recommended to compose volumes in the way so that space-based partitioning coincides with throughput-based one - it would be the optimal volume configuration, which provides true parallelism. If it is impossible for some reason, then choose a preferred partitioning method (space-based, or throughput-based). Note that space-based partitioning saves volume space, whereas throughput based one saves volume throughput.

When performing regular file operations, Reiser5 distributes data stripes throughout the volume evenly and fairly. It means that portion of IO-requests issued against each brick is equal to its relative capacity, that is, to the portion of capacity that the brick adds to the total volume's capacity.

Most volume operations are accompanied by rebalancing, which keeps the distribution fair. For example, adding a brick to a logical volume changes its partitioning, and hence breaks fairness of the distribution, so we need to move some data stripes to the new brick to make the distribution fair. Also, you cannot simply remove a brick from a logical volume: all data stripes should first be moved from that brick to other bricks of the logical volume.

Every time a user performs a volume operation, Reiser5 marks the LV as "not balanced". After successful balancing the status of the LV is changed to "balanced". If the balancing procedure fails for some reason, it should be resumed manually (with the volume.reiser4 utility).

It is allowed to perform regular file operations on not balanced LV. However, in this case:

- a) we don't guarantee a good quality of data distribution on your LV.

- b) you won't be able to perform volume operations on your LV except balancing - any other volume operation will return error (EBUSY).

So, don't forget to bring your LV to the balanced state as soon as possible!

2. Prepare Software and Hardware

Build, install and boot kernel with Reiser4 of software framework release number 5.X.Y. Kernel patches can be found here: Reiser4 file system for Linux OS: v5-unstable kernel

Note that by Linux kernel and GNU utilities the testing stuff is still recognized as "Reiser4". Make sure there is the following message in kernel logs:

"Loading Reiser4 (Software Framework Release: 5.X.Y)"

Build and install the latest libaal library: Reiser4 file system for Linux OS: v5-unstable libaal

Download, build and install the latest version 2.A.B of Reiser4progs package: Reiser4 file system for Linux OS: v5-unstable reiser4progs

Make sure that utility for managing logical volumes is installed (as a part of reiser4progs package) on your machine:

# volume.reiser4 -?

3. Creating a logical volume

Start from choosing a unique ID (uuid) of your volume. By default it is generated by mkfs utility. However, user can generate it himself by proper tools (e.g. uuid(1)) and store in an environment variable for convenience:

# VOL_ID=`uuid -v4`

# echo "Using uuid $VOL_ID"

Choose a stripe size for your logical volume. For a good quality of distribution it is recommended that the stripe doesn't exceed 1/10000 of the volume size. On the other hand, too small stripes will increase space consumption on your meta-data brick. In our example we choose stripe size 256K.

Start by creating the first brick of your volume, the meta-data brick, passing the volume ID and stripe size to the mkfs.reiser4 utility:

# mkfs.reiser4 -U $VOL_ID -t 256K /dev/vdb1

Currently only one meta-data brick per volume is supported, so it is recommended that the block device for the meta-data brick is not too small. In most cases it will be enough if your meta-data brick is not smaller than 1/200 of the maximal volume size. For example, a 100G meta-data brick will be able to service a ~20T logical volume.
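The two rules of thumb in this section (stripe not larger than 1/10000 of the volume size, meta-data brick not smaller than 1/200 of the maximal volume size) are easy to check before formatting. The helper names below are hypothetical and the sketch only restates the recommendations; it is not a reiser4progs feature.

```python
KiB = 1024
GiB = 1024 ** 3
TiB = 1024 ** 4


def max_recommended_stripe(volume_size_bytes):
    """Largest stripe size (bytes) that does not exceed 1/10000 of the volume size."""
    return volume_size_bytes // 10000


def min_recommended_metadata_brick(max_volume_size_bytes):
    """Smallest meta-data brick size (bytes): 1/200 of the maximal volume size, rounded up."""
    return -(-max_volume_size_bytes // 200)  # ceiling division


stripe = 256 * KiB
volume = 20 * TiB  # hypothetical maximal volume size
print(stripe <= max_recommended_stripe(volume))      # is a 256K stripe small enough?
print(min_recommended_metadata_brick(volume) / GiB)  # meta-data brick size in GiB
```

For a 20T volume this gives a meta-data brick of roughly 100G, in line with the example in the text.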

Mount your logical volume consisting of one meta-data brick:

# mount /dev/vdb1 /mnt

Find a record about your volume in the output of the following command:

# volume.reiser4 -l

Create configuration of your logical volume (its definition is above) and store it somewhere, but not on that volume!

Your logical volume is now on-line and ready to use. You can perform regular file operations and volume operations (e.g. add a data brick to your LV).

4. Adding a data brick to LV.

At any time you are able to add a data brick to your LV. You can do it in parallel with regular file operations executing on this volume. Make sure, however, that there are no other volume operations (e.g. removing a brick) in progress on your volume, otherwise your operation will fail with EBUSY.

Obviously, adding a brick will increase capacity of your volume.

Choose a block device for the new data brick. Make sure that it is not too large, or too small. Capacities of any 2 bricks of the same logical volume can not differ more than 2^19 (~1 million) times. E.g. your logical volume can not contain both, 1M and 2T bricks. Any attempts to add a brick of improper capacity will fail with error.

Format it in the same way as the meta-data brick, but also specify the "-a" option (to let mkfs know that it is a data brick).

# mkfs.reiser4 -U $VOL_ID -t 256K -a /dev/vdb2

Important: make sure you specified the same volume ID and stripe size as other bricks of the logical volume do have. Otherwise, operation of adding a data brick will fail.

Update configuration of your volume with UUID or name of the brick you want to add (item #4).

To add a brick simply pass its name as an argument for the option "-a" and specify your LV via its mount point:

# volume.reiser4 -a /dev/vdb2 /mnt

The procedure of adding a brick automatically invokes rebalancing, which moves a portion of data stripes to the newly added brick (so that the resulting distribution will be fair).

The portion of data blocks moved during such rebalancing is equal to the relative capacity of the new brick, that is, to the portion of capacity that the new brick adds to the updated LV's capacity. This important property defines the cost of the balancing procedure. If the portion of capacity added by a brick is small, then the number of stripes moved during balancing is also small.
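The cost property above is a one-line formula. A minimal sketch follows, with a hypothetical function name and sample capacities; it only evaluates the stated ratio and says nothing about how Reiser5 chooses which stripes to move.

```python
def moved_fraction_on_add(existing_capacities, new_capacity):
    """Portion of data stripes expected to move to a newly added brick."""
    if new_capacity <= 0:
        raise ValueError("capacity must be a positive integer")
    return new_capacity / (sum(existing_capacities) + new_capacity)


# Hypothetical volume: three bricks of capacity 100, plus one new brick.
print(moved_fraction_on_add([100, 100, 100], 100))  # a quarter of the data moves
print(moved_fraction_on_add([100, 100, 100], 10))   # a small brick moves little
```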

Like other user-space utilities, the operation of adding a brick can return error, even in the assumption that the brick you wanted to add is properly formatted. In this case check the status of your LV:

# volume.reiser4 /mnt

If the volume is unbalanced, then simply complete balancing manually:

# volume.reiser4 -b /mnt

Otherwise, check number of bricks in your LV. Most likely that it is the same as it was before the failed operation. In this case simply repeat the operation of adding a brick from scratch.

Upon successful completion update your volume configuration. That is, increment (#2), add info about the new brick to (#3) and remove records at (#4).

5. Removing a data brick from LV

At any time you are able to remove a data brick from your LV. You can do it in parallel with regular file operations executing on this volume. Make sure, however, that there are no other volume operations (e.g. adding a brick) in progress on your volume, otherwise your operation will fail with EBUSY.

Obviously, removing a brick will decrease the abstract capacity of your LV. Note that other bricks should have enough space to store all data blocks of the brick you want to remove, otherwise the removal operation will return error (ENOSPC).

Suppose you want to remove brick /dev/vdb2 from your LV mounted at /mnt.

Update your volume configuration with the UUID and name of the brick you want to remove (item #4).

To remove a brick simply pass its name as an argument for option "-r" and specify the logical volume by its mount point:

# volume.reiser4 -r /dev/vdb2 /mnt

The procedure of brick removal automatically invokes rebalancing, which distributes data of the brick to be removed among other bricks, so that the resulting distribution is also fair. The portion of data stripes moved during such rebalancing is equal to the relative capacity of the brick to be removed (that is, to the portion of capacity that the brick added to the LV's capacity).

It can happen, that the command above completes with error (like other user-space applications). In this case check the status of your LV:

# volume.reiser4 /mnt

If volume is not balanced, then simply complete balancing manually:

# volume.reiser4 -b /mnt

Otherwise, check the number of the bricks in your logical volume - it should be the same as before the failed operation. The error -ENOSPC indicates that free space on other bricks is not enough to fit all the data of the brick you want to remove.
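Before removing a brick it can help to estimate whether its data will fit elsewhere. The sketch below is a rough pre-check only, under stated assumptions: it uses the per-brick statistics "block count", "blocks used" and "system blocks" described in the monitoring section, it treats "blocks used - system blocks" as the data to move (valid for data bricks), and it applies the 5% reserve mentioned in the free-space section. The function and field names are hypothetical, and passing this check does not guarantee that the real operation succeeds.

```python
def removal_may_fit(bricks, victim, reserve=0.05):
    """Rough check: does the victim's data fit into free space on the other bricks?

    bricks maps a brick name to a dict with the keys
    'block_count', 'blocks_used' and 'system_blocks'.
    """
    data_to_move = bricks[victim]["blocks_used"] - bricks[victim]["system_blocks"]
    free_elsewhere = sum(
        b["block_count"] - b["blocks_used"]
        for name, b in bricks.items()
        if name != victim
    )
    return data_to_move <= free_elsewhere * (1 - reserve)


# Hypothetical statistics for a two-brick volume.
bricks = {
    "/dev/vdb1": {"block_count": 1000, "blocks_used": 300, "system_blocks": 20},
    "/dev/vdb2": {"block_count": 1000, "blocks_used": 500, "system_blocks": 10},
}
print(removal_may_fit(bricks, "/dev/vdb2"))
```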

On success update your volume configuration: remove information about the removed brick at #3 and #4.

6. Changing brick's capacity

At any time (in the assumption that no other volume operation is in progress) you can change abstract capacity of any brick to some new value, different from 0. Changing capacity always changes volume partitioning, and therefore, breaks fairness of distribution, so Reiser5 automatically launches rebalancing to make sure that resulted distribution is fair for the new set of capacities.

In particular, increasing a brick's capacity will move some data from other bricks to the brick whose capacity was increased. Decreasing a brick's capacity will move some data from the brick whose capacity was decreased to other bricks.

To change abstract capacity of a brick /dev/vdb1 to a new value (e.g. 200000), simply run

# volume.reiser4 -z /dev/vdb1 -c 200000 /mnt

Pronounced as "resize brick /dev/vdb1 to new capacity 200000 in volume mounted at /mnt".

The operation of changing capacity can return error. Most likely, it is -ENOSPC, which is a side effect of concurrent regular file writes. In this case check the status of your LV. If it is unbalanced, then consider removing some files from your LV and complete balancing by running

# volume.reiser4 -b /mnt

Otherwise, repeat the operation from scratch.

Comment. Changing bricks capacity to 0 is undefined and will return error. Consider brick removal operation instead.

7. Operations with meta-data brick

Meta-data brick can also contain data stripes and participate in data distribution like other data bricks. So that all the volume operations described above are also applicable to meta-data brick. Note, however, that it is impossible to completely remove meta-data brick from the logical volume for obvious reasons (meta-data need to be stored somewhere), so brick removal operation applied to the meta-data brick actually removes it from Data-Storage Array (DSA), not from the logical volume. DSA is a subset of LV consisting of bricks, participating in data distribution. Once you remove meta-data brick from DSA, that brick will be used only to store meta-data. Operation of adding a brick, being applied to a meta-data brick, returns the last one back to DSA.

Important: Reiser5 doesn't count busy data and meta-data blocks separately. So in contrast with data bricks (which contain only data) you are not able to find out real space occupied by data blocks on the meta-data brick - Reiser5 knows only total space occupied.

To check the status of the meta-data brick simply run

# volume.reiser4 /mnt

and compare values of "bricks total" and "bricks in DSA". If they are equal, then meta-data brick participates in data distribution. Otherwise, "bricks total" should be 1 more than "bricks in DSA" - it indicates that meta-data brick doesn't participate in data distribution (and therefore, doesn't contain data blocks). Note that other cases are impossible: for data bricks participation in LV and DSA is always equivalent.
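The comparison described above can be written down as a tiny decision function. The function name is made up for illustration; the two inputs are the "bricks total" and "bricks in DSA" values read from the volume.reiser4 output.

```python
def metadata_brick_in_dsa(bricks_total, bricks_in_dsa):
    """Return True if the meta-data brick participates in data distribution."""
    if bricks_total == bricks_in_dsa:
        return True
    if bricks_total == bricks_in_dsa + 1:
        return False
    # According to the text, no other combination can occur.
    raise ValueError("unexpected combination of 'bricks total' and 'bricks in DSA'")


print(metadata_brick_in_dsa(3, 3))  # meta-data brick also stores data stripes
print(metadata_brick_in_dsa(3, 2))  # meta-data brick stores meta-data only
```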

8. Unmounting a logical volume

To terminate a mount session just issue usual umount command with the mount point specified.

Note that after unmounting the volume all bricks by default remain registered in the system until system shutdown. If you want to unregister a brick before system shutdown, then simply issue the following command:

# volume.reiser4 -u BRICK_NAME

9. Deploying a logical volume after correct unmount

Make sure (by checking your volume configuration) that all bricks of the volume are registered in the system. The list of all volumes and bricks registered in the system can be found in the output of the following command:

# volume.reiser4 -l

Issue usual mount command against one of the bricks of your volume. It is recommended to specify meta-data brick in the mount command. If not all bricks of the volume are registered, then attempts to mount such volume will fail with a respective kernel message.

NOTE: Reiser5 will refuse to mount a logical volume, in the case, when a wrong set of bricks is registered in the system. It can happen due to careless handling of off-line volumes, leading to the appearance of "artifacts" in the list of registered bricks. If you want to re-format a brick, make sure it is unregistered.

10. Deploying a logical volume after correct shutdown

To be able to mount your LV, make sure that all its bricks (data and meta-data) are registered in the system. If not all bricks of the volume are registered, then attempts to mount such a volume will fail with a respective kernel message. For this reason we strongly recommend that the user keep track of his LV: store its configuration somewhere, but not on this volume! And don't forget to update that configuration after _every_ volume operation. If you lose the configuration of your LV and don't remember it (which is most likely for large volumes), then it will be rather painful to restore it: currently there are no tools to manage offline logical volumes, so users are prompted to do this on their own. It is not at all difficult.

To register a brick in the system use the following command:

# volume.reiser4 -g BRICK_NAME

To print a list of all registered bricks use

# volume.reiser4 -l

To mount your LV just issue a mount command for any one brick of your LV.

Comment. Reiser5 always tries to register the brick which is passed to the mount command as an argument, so it is not needed to reregister bricks you want to issue a mount command against.

11. Deploying a logical volume after hard reset or system crash

If no volume operations were interrupted by the hard reset or system crash, then just follow the instructions in section 9.

In Reiser5 only restricted number of bricks participate in every transaction. Maximal number of such bricks can be specified by user. At mount time a transaction replay procedure will be launched on each such brick independently in parallel.

Depending on a kind of interrupted volume operation, perform one of the following actions:

a. Adding a brick was interrupted.

Check your volume configuration. Register the old set of bricks (that is, the set of bricks that the volume had before applying the operation) and try to mount. In the case of error, register also the brick you wanted to add and try to mount again.

Check the status of your LV by running

# volume.reiser4 /mnt

If the volume is unbalanced, then complete balancing manually by running

# volume.reiser4 -b /mnt

Check "bricks total" of your LV in the output of

# volume.reiser4 /mnt

Compare it with the old number of bricks in the configuration. The new value should be an increment of the old one. If the number of bricks is the same, then your operation of adding a brick was completely rolled back by the transaction manager, so that you need to repeat it from scratch. Otherwise, your operation was successfully completed - update your volume configuration respectively.

b. Brick removal was interrupted.

Check your volume configuration. Register the old set of bricks (that is, the set of bricks that the volume had before applying the interrupted operation) except the brick you wanted to remove. Try to mount the volume. In the case of error, register also the brick you wanted to remove and try to mount again.

Check the status of your LV:

# volume.reiser4 /mnt

If the volume is unbalanced then complete balancing manually by running

# volume.reiser4 -b /mnt

Comment. After successful completion of balancing the brick will be automatically removed from the volume. Verify this by checking the status of your LV:

# volume.reiser4 /mnt

Update your volume configuration respectively.

c. Another volume operation was interrupted

Using the volume configuration, register the new set of bricks and try to mount the volume. The mount should be successful.

Check the status of your LV:

# volume.reiser4 /mnt

If the volume is unbalanced then complete balancing manually by running

# volume.reiser4 -b /mnt

12. LV monitoring.

Common info about LV mounted at /mnt

# volume.reiser4 /mnt

ID: Volume UUID

volume: ID of plugin managing the volume

distribution: ID of distribution plugin

stripe: Stripe size in bytes

segments: Number of hash space segments (for distribution)

bricks total: Total number of bricks in the volume

bricks in DSA: Number of bricks participating in data distribution

balanced: Balanced status of the volume

Info about any of its bricks, of index J:

# volume.reiser4 -p J /mnt

internal ID: Brick's "internal ID" and its status in the volume

external ID: Brick's UUID

device name: Name of the block device associated with the brick

block count: Size of the block device in blocks

blocks used: Total number of occupied blocks on the device

system blocks: Minimal possible number of busy blocks on that device

data capacity: Abstract capacity of the brick

space usage: Portion of occupied blocks on the device

Comment. When retrieving a brick's info make sure that no volume operations over that volume are in progress. Otherwise the command above will return error (EBUSY).

WARNING. Brick info provided this way is not necessarily the most recent. To get up-to-date info run sync(1) and make sure that no regular file operations are in progress.
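For scripting, the statistics can be collected into a dictionary. The sketch below assumes that volume.reiser4 prints one "name: value" pair per line, as in the excerpts shown in this guide; the exact output format of the tool is not specified here, so treat the parser as an assumption and adjust it to what your version prints. The function name and sample text are made up for the example.

```python
def parse_stats(text):
    """Parse 'name: value' lines into a dict, ignoring lines without a value."""
    stats = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep and value.strip():
            stats[key.strip()] = value.strip()
    return stats


# Sample text in the assumed format (values taken from the example in section 14).
sample = """\
blocks used: 1657203
system blocks: 115
data capacity: 2621069
"""
stats = parse_stats(sample)
print(int(stats["blocks used"]) - int(stats["system blocks"]))
```

In a real script the text could be captured with subprocess.run(["volume.reiser4", "-p", "1", "/mnt"], capture_output=True, text=True).stdout, after running sync as the warning above recommends.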

13. Checking free space

To check the number of available free blocks on a volume mounted at /mnt, make sure that no regular file operations, as well as volume operations, are in progress on that volume, then run

# sync

# df --block-size=4K /mnt

To check the number of free blocks on the brick of index J run

# volume.reiser4 -p J /mnt

Then calculate the difference between block count and blocks used

Comment. Not all free blocks on a brick/volume are available for use. Number of available free blocks is always ~95% of total number of free blocks (Reiser4 reserves 5% to make sure that regular file truncate operations won't fail).

NOTE: volume.reiser4 shows total number of free blocks, whereas df(1) shows number of available free blocks.
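The relation between free and available blocks can be sketched as follows. The function names and the sample block count are hypothetical, and the 95% figure is the approximate value given in the comment above, so the result is an estimate rather than the exact number df(1) would report.

```python
def free_blocks(block_count, blocks_used):
    """Total free blocks on a brick: block count minus blocks used."""
    return block_count - blocks_used


def available_blocks(free):
    """Approximate number of usable free blocks (about 95% of free blocks)."""
    return free * 95 // 100


# Hypothetical brick: 2621440 blocks in total, 1657203 of them used.
free = free_blocks(2621440, 1657203)
print(free, available_blocks(free))
```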

"Space usage" statistics shows a portion of busy blocks on individual brick. For the reasons explained above "space usage" on any brick can not be more than 0.95

14. Checking quality of data distribution

Quality of data distribution is a measure of deviation of the real data space usage from the ideal one defined by volume partitioning. The smaller the deviation, the better the distribution quality.

Checking quality of distribution makes sense only when your volume partitioning is space-based, or if it coincides with the space-based one.

If your partitioning is throughput-based, and it doesn't coincide with the space-based one, then the quality of actual data distribution can be rather bad, as in this case the file system takes care that low-performance devices do not become a bottleneck, and effective space usage is not a high priority.

Checking quality of data distribution is based on the free blocks accounting, provided by the file system. Note that file system doesn't count busy data and meta-data blocks separately, so you are not able to find real data space usage, and hence to check quality of distribution in the case when meta-data brick contains data blocks.

To check quality of distribution

- 1) make sure that meta-data brick doesn't contain data blocks;

- 2) make sure that no regular file and volume operations are currently in progress;

- 3) find "blocks used", "system blocks" and "data capacity" statistics for each data brick:

# sync

# volume.reiser4 -p 1 /mnt

...

# volume.reiser4 -p N /mnt

- 4) find real data space usage on each brick;

- 5) calculate partitioning and ideal data space usage on each data brick;

- 6) find deviation of (4) from (5).

Example.

Let's build a LV of 3 bricks (one 10G meta-data brick vdb1, and two data bricks: vdc1 (10G) and vdd1 (5G)) with space-based partitioning:

# VOL_ID=`uuid -v4`

# echo "Using uuid $VOL_ID"

# mkfs.reiser4 -U $VOL_ID -y -t 256K /dev/vdb1

# mkfs.reiser4 -U $VOL_ID -y -a -t 256K /dev/vdc1

# mkfs.reiser4 -U $VOL_ID -y -a -t 256K /dev/vdd1

# mount /dev/vdb1 /mnt

Fill the meta-data brick with data:

# dd if=/dev/zero of=/mnt/myfile bs=256K

No space left on device...

Add data bricks /dev/vdc1 and /dev/vdd1 to the volume:

# volume.reiser4 -a /dev/vdc1 /mnt

# volume.reiser4 -a /dev/vdd1 /mnt

Move all data blocks to the newly added bricks:

# volume.reiser4 -r /dev/vdb1 /mnt

# sync

Now the meta-data brick doesn't contain data blocks (only meta-data ones), so we can calculate the quality of data distribution:

# volume.reiser4 /mnt -p0

blocks used: 503

# volume.reiser4 /mnt -p1

blocks used: 1657203

system blocks: 115

data capacity: 2621069

# volume.reiser4 /mnt -p2

blocks used: 833001

system blocks: 73

data capacity: 1310391

Based on the statistics above, calculate the quality of distribution.

Total data capacity of the volume: 2621069 + 1310391 = 3931460

Relative capacities of data bricks:

C1 = 2621069 / (2621069 + 1310391) = 0.6667

C2 = 1310391 / (2621069 + 1310391) = 0.3333

Real space usage on data bricks (blocks used - system blocks):

R1 = 1657203 - 115 = 1657088

R2 = 833001 - 73 = 832928

Real space usage on the volume:

R = R1 + R2 = 1657088 + 832928 = 2490016

Ideal data space usage on data bricks (here T = R, the total number of data blocks stored in the volume):

I1 = C1 * T = 0.6667 * 2490016 = 1660094

I2 = C2 * T = 0.3333 * 2490016 = 829922

Deviation: D = (R1, R2) - (I1, I2) = (-3006, 3006)

Relative deviation: D/R = (-0.0012, 0.0012)

Quality of distribution:

Q = 1 - max(|D1|, |D2|)/R = 1 - 0.0012 = 0.9988
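The calculation above can be repeated for any number of data bricks with a short script. This is a sketch of the arithmetic from this section only; the function name is made up, and the input is the triple ("blocks used", "system blocks", "data capacity") read from volume.reiser4 for each data brick. It works with unrounded relative capacities, so intermediate values differ slightly from the hand calculation, which rounds C1 and C2 to four digits.

```python
def distribution_quality(bricks):
    """Quality of distribution Q for a list of data bricks.

    Each brick is a tuple (blocks_used, system_blocks, data_capacity).
    """
    real = [used - system for used, system, _ in bricks]
    total_capacity = sum(capacity for _, _, capacity in bricks)
    total_real = sum(real)
    # Ideal usage of a brick is its relative capacity times the total data.
    ideal = [total_real * capacity / total_capacity for _, _, capacity in bricks]
    relative_deviation = [(r - i) / total_real for r, i in zip(real, ideal)]
    return 1 - max(abs(d) for d in relative_deviation)


# Statistics of the two data bricks from the example above.
q = distribution_quality([(1657203, 115, 2621069), (833001, 73, 1310391)])
print(round(q, 4))
```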

Comment. For any specified number of bricks N and quality of distribution Q it is possible to find a configuration of a logical volume composed of N bricks, so that quality of distribution on that volume will be better than Q.

Comment. Quality of distribution Q doesn't depend on the number of bricks in the logical volume. This is a theorem, which can be strictly proven.

2 From: Edward Shishkin <edward.shishkin@gmail.com>

Date: Tue, 31 Dec 2019 14:53:45 +0100

Subject: [ANNOUNCE] Reiser5 (Format Release 5.X.Y)

To: ReiserFS development mailing list <reiserfs-devel@vger.kernel.org>, linux-kernel <linux-kernel@vger.kernel.org>

Reiser5: Data Tiering. Burst Buffers. Speedup synchronous modifications3

Dumping peaks of IO load to a proxy device

Now you can add a small high-performance block device to your large logical volume composed of relatively slow commodity disks and get the impression that your whole volume has throughput as high as that of the proxy device!

This is based on a simple observation that in real life IO load is going by peaks, and the idea is to dump those peaks to a high-performance proxy device. Usually you have enough time between peaks to flush the proxy device, that is, to migrate the hot data from the proxy device to slow media in background mode, so that your proxy device is always ready to accept a new portion of peaks.

This technique, also known as Burst Buffers, initially appeared in the area of HPC. Despite this fact, it is also important for ordinary applications. In particular, it speeds up applications which perform so-called atomic updates.

Speedup atomic updates in user-space

There is a whole class of applications with high requirements for data integrity. Such applications (typically databases) want to be sure that any data modification either completes or doesn't, and never appears as partially occurred. Some applications have weaker requirements: with some restrictions they also accept partially occurred modifications.

Atomic updates in user space are performed via a sequence of 3 steps. Suppose you need to modify data of some file foo in an atomic way. For this you need to:

- 1) write a new temporary file foo.tmp with modified data

- 2) issue fsync(2) against foo.tmp

- 3) rename foo.tmp to foo.

At step 1 the file system populates page cache with new data. At step 2 the file system allocates disk addresses for all logical blocks of the file foo.tmp and writes that file to disk. At step 3 all blocks containing old data get released.
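The write-fsync-rename sequence can be sketched in Python as follows. This is a generic user-space illustration that works on any POSIX file system, not Reiser5-specific code; the function name atomic_write is made up for the example, and it uses a uniquely named temporary file in the same directory instead of the literal name foo.tmp.

```python
import os
import tempfile


def atomic_write(path, data):
    """Replace the contents of `path` with `data` (bytes) via write-fsync-rename."""
    directory = os.path.dirname(os.path.abspath(path))
    # Step 1: write the new data to a temporary file in the same directory,
    # so that the final rename stays within one file system.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "wb") as tmp:
            tmp.write(data)
            tmp.flush()
            # Step 2: force the temporary file's data to stable storage.
            os.fsync(tmp.fileno())
        # Step 3: atomically rename the temporary file over the target.
        os.replace(tmp_path, path)
    except BaseException:
        # Clean up the temporary file if anything went wrong.
        try:
            os.unlink(tmp_path)
        except FileNotFoundError:
            pass
        raise
```

A reader of the target file sees either the old or the new contents, never a mixture. Applications that also need the rename itself to be durable typically fsync the containing directory afterwards; that step is omitted here to keep the sketch aligned with the three steps above.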

Note that steps 2 and 3 cause a substantial performance drop on slow media. The situation improves when all dirty data are written to a dedicated high-performance proxy disk, which is exactly what happens in a file system with Burst Buffers support.

Speedup all synchronous modifications (TODO)

Burst Buffers and transaction manager

Not only dirty data pages, but also dirty meta-data pages can be dumped to the proxy device, so that step (3) above also won't contribute to the performance drop.

Moreover, not only new logical data blocks can be dumped to the proxy disk. All dirty data pages, including ones, which already have location on the main (slow) storage can also be relocated to the proxy disk, thus, speeding up synchronous modification of files in all cases (not only in atomic updates via write-fsync-rename sequence described above).

Indeed, recall that any modified page is always written to disk in the context of committing some transaction. Depending on the commit strategy (there are two: relocate and overwrite), for each such modified dirty page there are only 2 possibilities:

- a) to be written right away to a new location,

- b) to be written first to a temporary location (journal), then to be written back to permanent location.

With Burst Buffers support, in case (a) the file system writes the dirty page right away to the proxy device. Then the user should take care to migrate it back to the permanent storage (see section Flushing proxy device below). In case (b) the modified copy will be written to the proxy device (wandering logs), then at checkpoint time (playing a transaction) the reiser4 transaction manager will write it to the permanent location (on commodity disks). In this case the user doesn't need to worry about flushing the proxy device; however, the commit procedure takes more time, as the user should also wait for checkpoint completion.

So from the standpoint of performance the write-anywhere transaction model (reiser4 mount option "txmod=wa") is preferable to the journalling model (txmod=journal), or even the hybrid model (txmod=hybrid).

Predictable and non-predictable migration

Meta-data migration

As we already mentioned, not only dirty data pages, but also dirty meta-data pages can be dumped to the proxy device. Note, however, that non-predictable meta-data migration is not possible because of a chicken-and-egg problem. Indeed, non-predictable migration means that nobody knows on what device of your logical volume a stripe of data will be relocated in the future. Such migration requires recording the location of data stripes. Now note that such records are always a part of meta-data. Hence, you are not able to migrate meta-data in a non-predictable way.

However, it is perfectly possible to distribute/migrate meta-data in a predictable way (it will be supported in so-called symmetric logical volumes, currently not implemented). A classic example of predictable migration is RAID arrays (once you add or remove a device to/from the array, all data blocks migrate in a predictable way during rebalancing). If relocation is predictable, then there is no need to record locations of data stripes: they can always be calculated.
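The difference between the two kinds of placement can be illustrated with a toy model. The round-robin rule below is a simplified RAID-0-style mapping chosen only for illustration; it is not the distribution algorithm used by Reiser5, and all names are hypothetical.

```python
def device_for_stripe(stripe_index, num_devices):
    """Predictable placement: the device is a pure function of the stripe index."""
    return stripe_index % num_devices


class RecordedPlacement:
    """Non-predictable placement: every location has to be recorded (as meta-data)."""

    def __init__(self):
        self._table = {}

    def relocate(self, stripe_index, device):
        self._table[stripe_index] = device

    def locate(self, stripe_index):
        return self._table[stripe_index]


# Predictable: nothing is stored, locations are recomputed on demand,
# also after the number of devices changes from 3 to 4.
print([device_for_stripe(i, 3) for i in range(6)])
print([device_for_stripe(i, 4) for i in range(6)])

# Non-predictable: a stripe can go anywhere, but only the table knows where.
placement = RecordedPlacement()
placement.relocate(5, 2)
print(placement.locate(5))
```

The table in the second case is itself meta-data, which is why it cannot be used to find meta-data that has been moved in the same non-predictable way.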

Thus, non-predictable migration is applicable to data only.

Definition of data tiering.

Using proxy device to store hot data (TODO)

Now we can precisely define tiering as (meta-)data relocation in accordance with some strategy (automatic, or user-defined), so that every relocated unit always gets location on another device-component of the logical volume.

During such relocation block number B1 on device D1 gets released, first address component is changed to D2, second component is changed to 0 (which indicates not allocated block number), then the file system allocates block number B2 on device D2:

(D1, B1) -> (D2, 0) -> (D2, B2)

Note that tiering is not defined for simple volumes (i.e. volumes consisting of only one device). Relocation of blocks within one device is always in the competence of the file system (to be precise, of the block allocator).

Burst buffers is just one of strategies, in accordance with which all new logical blocks (optionally, all dirty pages) always get location on a dedicated proxy device. As we have figured out, Burst Buffers is useful for HPC applications, as well as for usual applications executing fsync(2) frequently.

There are other data tiering strategies, which can be useful for other class of applications. All of them can be easily implemented in Reiser5.

For example, you can use proxy device to store hot data only. With such strategy new logical blocks (which are always cold) will always go to the main storage (in contrast with Burst Buffers, where new logical blocks first get written to the proxy disk). Once in a while you need to scan your volume in order to push colder data out, and pull hotter data in the proxy disk. Reiser5 contains a common interface for this. It is possible to maintain per-file, or even per-blocks-extent temperature of data (e.g. as a generation counter), but we still don't have more or less satisfactory algorithms to determine critical temperature for pushing data in/out proxy disk.

Getting started with proxy disk over logical volume

Just follow the administration guide:

https://reiser4.wiki.kernel.org/index.php/Proxy_Device_Administration

WARNING: THE STUFF IS NOT STABLE! Don't store important data on Reiser5 logical volumes till beta-stability announcement.

1 https://lkml.org/lkml/2018/10/23/188

3 From: Edward Shishkin <edward.shishkin@gmail.com>

Date: Mon, May 25, 2020 at 6:08 PM

Subject: [ANNOUNCE] Reiser5: Data Tiering. Burst Buffers. Speedup synchronous modifications

To: ReiserFS development mailing list <reiserfs-devel@vger.kernel.org>, linux-kernel <linux-kernel@vger.kernel.org>